Demo material

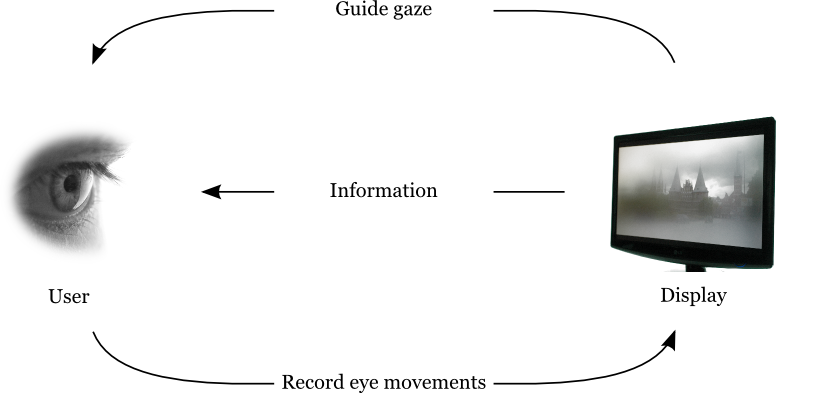

Gaze-contingent interactive displays

Gaze-contingent interactive displays (GCIDs) are devices that interact with the human observer to optimize visual communication systems. Such a display actively tracks the observer’s gaze position (that is, where exactly he or she is looking) and changes its output in order to guide the observer’s gaze to task-relevant locations. We coined the term Gaze Guidance for this type of human-computer interaction. We imagine that GCIDs can be useful in a wide variety of tasks, such as working at a PC display, analyzing medical images, interacting with virtual worlds, or even driving a car (cockpit).

Here we show some results from our research:

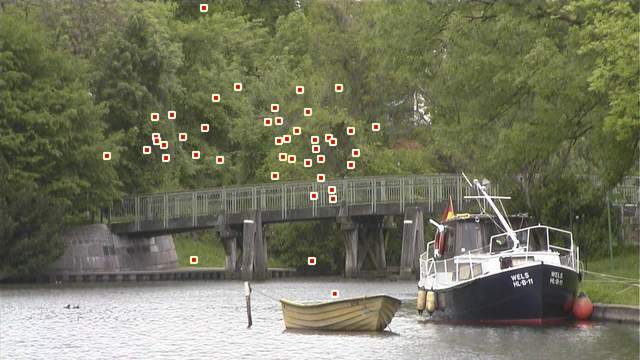

Eye movements on natural videos

A video of a canal scene near Lübeck, Germany. Overlaid are the gaze positions of 46 observers: in a relatively uneventful scene, observers look anywhere. However, when a flock of ducks flies across the scene, every observer’s gaze is drawn to a single spot.
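The contrast between scattered and tightly clustered gaze can be put into a number. The following is a minimal sketch, not the analysis used for this video: it assumes gaze samples are given as pixel coordinates and uses the mean distance from the centroid as a simple dispersion measure. The function name `gaze_dispersion` and the sample coordinates are made up for illustration.

```python
import math

def gaze_dispersion(points):
    """Mean Euclidean distance of gaze points from their centroid (in pixels)."""
    n = len(points)
    cx = sum(x for x, _ in points) / n
    cy = sum(y for _, y in points) / n
    return sum(math.hypot(x - cx, y - cy) for x, y in points) / n

# Hypothetical gaze samples for one video frame each (not data from the study).
uneventful_frame = [(100, 80), (900, 600), (500, 100), (200, 650)]
ducks_frame = [(640, 300), (644, 298), (638, 305), (642, 301)]

print(gaze_dispersion(uneventful_frame))  # large: observers look in many places
print(gaze_dispersion(ducks_frame))       # small: gaze converges on one spot
```

A low value indicates that observers agree on where to look; a high value indicates that gaze is spread across the scene.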

GCID with local contrast reduction

On this gaze-contingent interactive display, contrast was reduced at some interesting locations to render them less distracting, that is, to manipulate the observer's gaze to other, potentially interesting locations.
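The idea of lowering contrast around one location can be sketched in a few lines. This is a simplified, purely spatial illustration under our own assumptions, not the processing used on the display shown here: the image is a grayscale list of rows, each pixel is pulled toward the global image mean, and the pull falls off with a Gaussian around the chosen location. The function name `reduce_local_contrast` and the parameters `sigma` and `strength` are hypothetical.

```python
import math

def reduce_local_contrast(image, cx, cy, sigma=2.0, strength=0.8):
    """Return a copy of a grayscale image (list of rows of floats) with
    contrast lowered around pixel (cx, cy).

    Each pixel is moved toward the global image mean. The shift is largest
    at (cx, cy) and decays with a Gaussian of width sigma, so distant
    pixels stay practically unchanged.
    """
    height, width = len(image), len(image[0])
    mean = sum(sum(row) for row in image) / (height * width)
    out = []
    for y, row in enumerate(image):
        new_row = []
        for x, value in enumerate(row):
            d2 = (x - cx) ** 2 + (y - cy) ** 2
            weight = strength * math.exp(-d2 / (2.0 * sigma ** 2))
            new_row.append(value + weight * (mean - value))
        out.append(new_row)
    return out
```

In a gaze-contingent setting, the location passed as `(cx, cy)` would be chosen relative to the current gaze position and updated as the observer's eyes move.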

Gaze guidance to teach experts' skills in the natural sciences

This video, with a voice-over by a biology professor, is used to teach biology students about different fish locomotion patterns (here: balistiform locomotion). To help observers understand and learn the specific pattern, their gaze is directed to the relevant image region by blurring the irrelevant (and potentially distracting) regions.

This experiment was performed in collaboration with the Knowledge Media Research Center, Tübingen.
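Blurring everything except a relevant region can be illustrated with a small sketch. It is not the method used to produce the teaching video; it assumes a grayscale image stored as a list of rows, a simple box blur, and a circular region that is kept sharp. The names `box_blur`, `blur_outside_region`, `keep_radius`, and `blur_radius` are hypothetical.

```python
def box_blur(image, radius=1):
    """Blur a grayscale image (list of rows) by averaging each pixel's
    (2 * radius + 1)-wide neighbourhood, clipped at the image borders."""
    height, width = len(image), len(image[0])
    out = []
    for y in range(height):
        row = []
        for x in range(width):
            ys = range(max(0, y - radius), min(height, y + radius + 1))
            xs = range(max(0, x - radius), min(width, x + radius + 1))
            window = [image[j][i] for j in ys for i in xs]
            row.append(sum(window) / len(window))
        out.append(row)
    return out

def blur_outside_region(image, cx, cy, keep_radius, blur_radius=1):
    """Keep pixels within keep_radius of (cx, cy) sharp and replace all
    other pixels with their blurred version."""
    blurred = box_blur(image, blur_radius)
    out = []
    for y, row in enumerate(image):
        out.append([
            value if (x - cx) ** 2 + (y - cy) ** 2 <= keep_radius ** 2
            else blurred[y][x]
            for x, value in enumerate(row)
        ])
    return out
```

A hard circular boundary keeps the example short; a smooth transition between the sharp and blurred areas would likely be less noticeable to the viewer.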

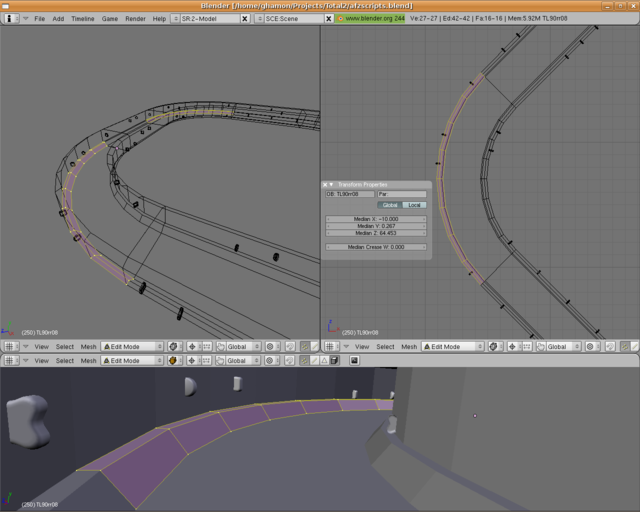

Gaze-contingent Virtual Reality environment

To test the task-relevance of gaze guidance, subjects have to perform a challenging perceptuo-motor task in this Virtual Reality environment.

Design of Virtual Reality environment

The graphics of the Virtual Reality environment are created in Blender, physics are simulated with the NVIDIA PhysX engine, and the Object Oriented Input System (OIS) is used for navigation.